Research

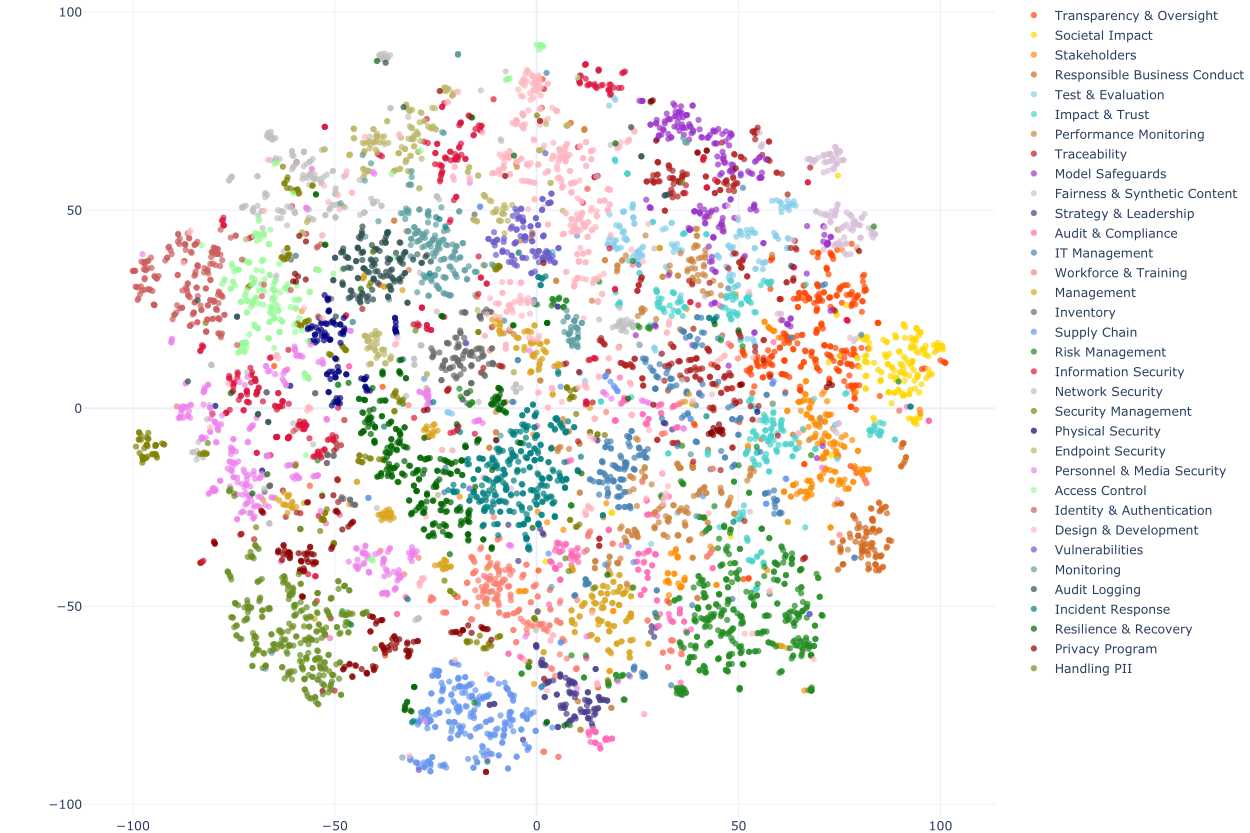

Practitioners currently face an overwhelming amount of information when implementing AI best practices. The myriad existing standards and frameworks place an enormous burden on organizations to decipher this guidance on their own, requiring time, resources, and expertise that many organizations, particularly smaller ones, cannot afford. To address this challenge, this project (1) harmonizes guidance from over 52 reports and frameworks, (2) operationalizes best practices by providing a comprehensive reference guide for implementation, and (3) tailors guidance to specific use cases.

Kyle Crichton, Abhiram Reddy, Jessica Ji, Ali Crawford, Mia Hoffmann, Colin Shea-Blymyer, and John Bansemer. "Harmonizing AI Guidance: Distilling Voluntary Standards and Best Practices into a Unified Framework" (CSET 2025). View Here

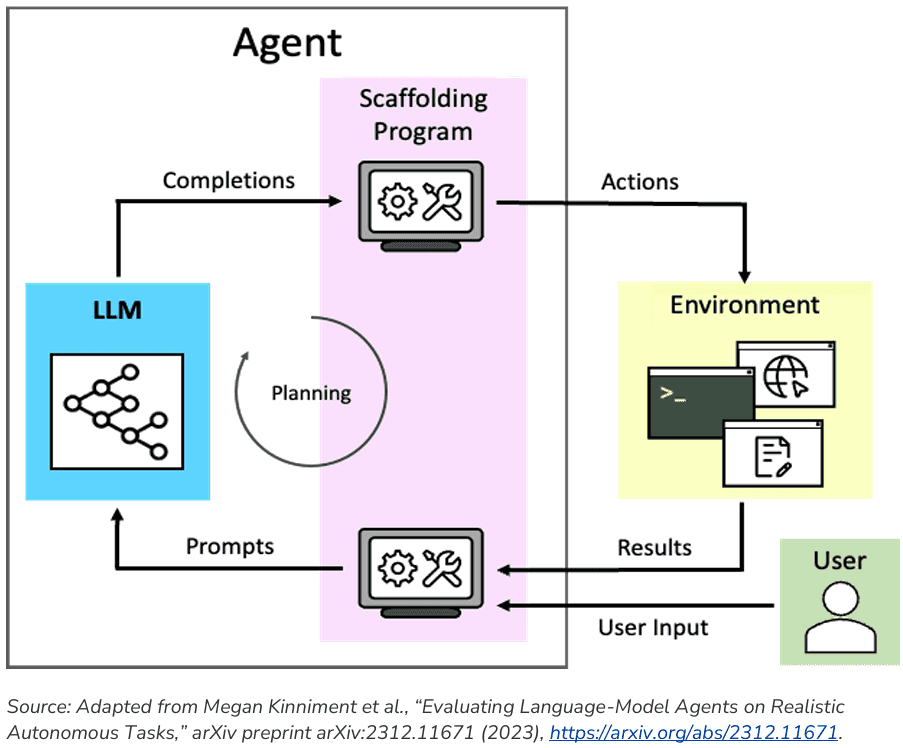

This body of work covers core research conducted as part of the CyberAI team at CSET, which focuses on the cybersecurity implications of, and applications to, artificial intelligence. It includes past and ongoing work on how to keep AI systems secure when deployed in highly sensitive critical infrastructure environments, and on the cybersecurity tools that can be used to protect and control autonomous AI agents.

Kyle Crichton, Jessica Ji, Kyle Miller, John Bansemer, Zachary Arnold, David Batz, Minwoo Choi, Marisa Decillis, Patricia Eke, Daniel M. Gerstein, Alex Leblang, Monty McGee, Greg Rattray, Luke Richards, and Alana Scott. "Securing Critical Infrastructure in the Age of AI" (CSET 2024). View Here

Helen Toner, John Bansemer, Kyle Crichton, Matthew Burtell, Thomas Woodside, Anat Lior, Andrew Lohn, Ashwin Acharya, Beba Cibralic, Chris Painter, Cullen O’Keefe, Iason Gabriel, Kathleen Fisher, Ketan Ramakrishnan, Krystal Jackson, Noam Kolt, Rebecca Crootof, and Samrat Chatterjee. "Through the Chat Window and Into the Real World: Preparing for AI Agents" (CSET 2024). View Here

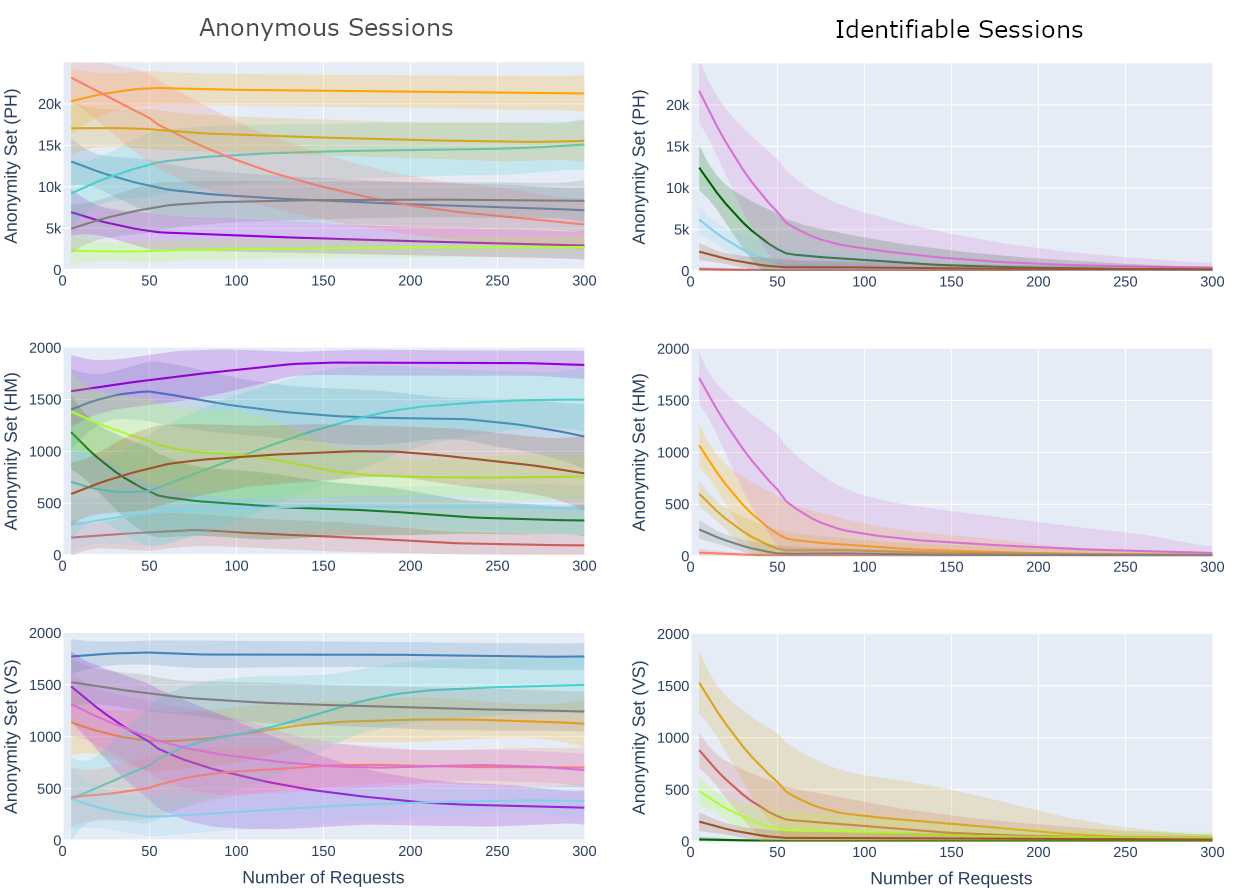

This area of research focuses on the privacy harms that arise from the use of machine learning in online tracking applications. I have two papers related to this topic. The first examines the use of behavioral fingerprinting (i.e., tracking based on the web pages you and I visit), which can enable an adversary to conduct more persistent, long-term web tracking by linking together disparate user identifiers (e.g., browser cookies or IP addresses). The second examines how sensitive browsing information (e.g., visits to websites containing information related to health conditions, abortion, LGBTQ+ content, and immigration status) is systematically leaked through online advertising profiles, posing substantial risk to vulnerable communities.

Kyle Crichton, Nicolas Christin, and Lorrie Faith Cranor. "Rethinking Fingerprinting: An Assessment of Behavior-based Methods at Scale and Implications for Web Tracking". Privacy Enhancing Technologies Symposium (PETS 2025). View Here

Kyle Crichton, John Krumm, and Siddharth Suri. "Inferring Sensitive Browsing Information from Online Advertising Profiles". In Submission.

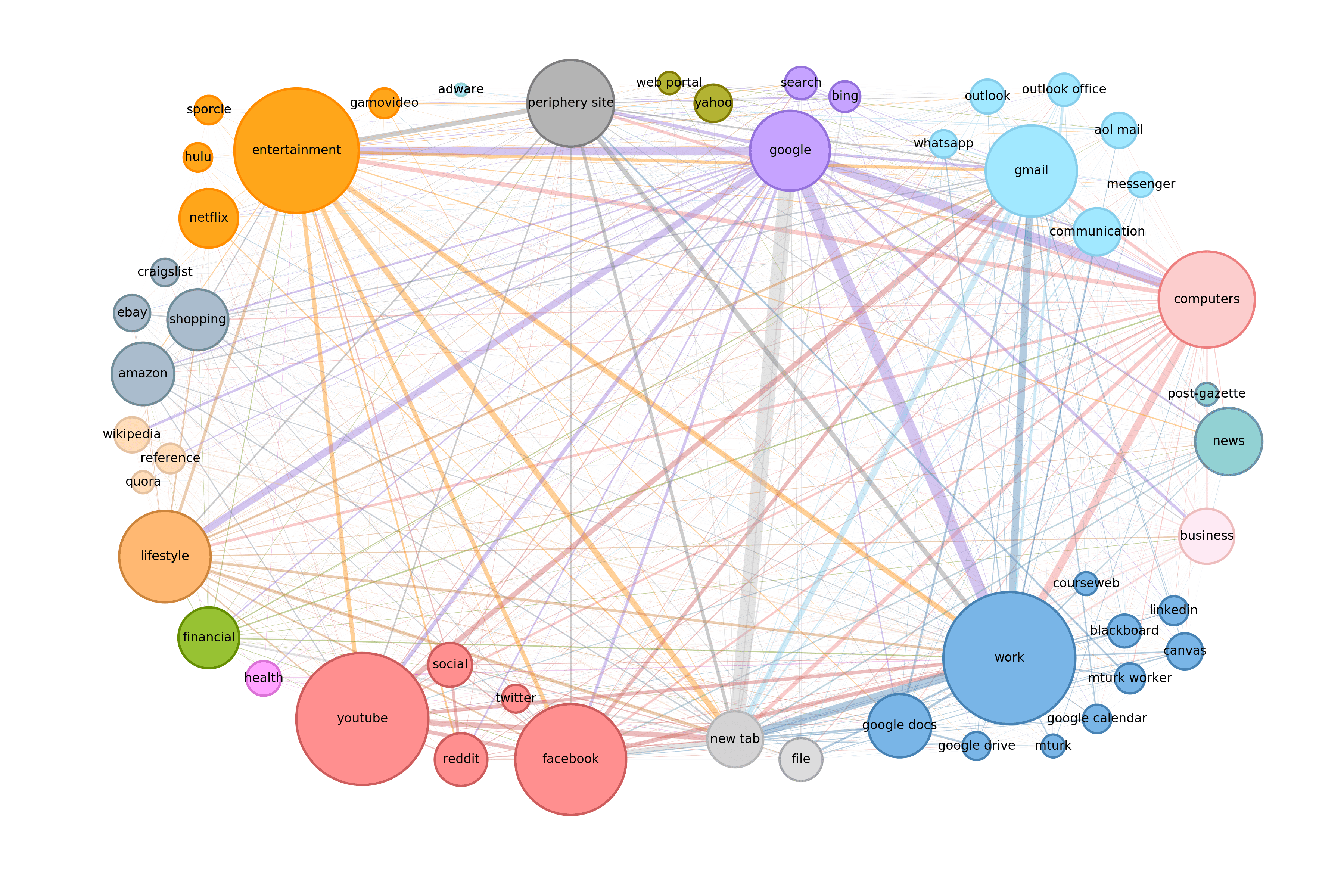

Data on how people browse the web is abundantly collected but rarely distributed due to its proprietary nature. As a result, our understanding of user web browsing primarily derives from a set of small-scale studies conducted over a decade ago. Using resources like the Security Behavior Observatory, this work provides a more detailed and recent snapshot of user browsing patterns, allowing us to better understand how people navigate and spend their time online. We find that user browsing is highly centralized, with over 50% of browsing time dedicated to a mere 32 websites. However, users also spend a disproportionate amount of time on websites ranked outside of the top 10 million, which are known to be more likely to contain risky and malicious content.

Kyle Crichton, Nicolas Christin, and Lorrie Faith Cranor. "How Do Home Computer Users Browse the Web?" ACM Transactions on the Web (TWEB 2022). View Here

Akira Yamada, Kyle Crichton, Yukiko Sawaya, Jin-Dong Dong, Sarah Pearman, Ayumu Kubota, and Nicolas Christin. "On recruiting and retaining users for security sensitive longitudinal measurement panels". Symposium on Usable Privacy and Security (SOUPS 2022). View Here

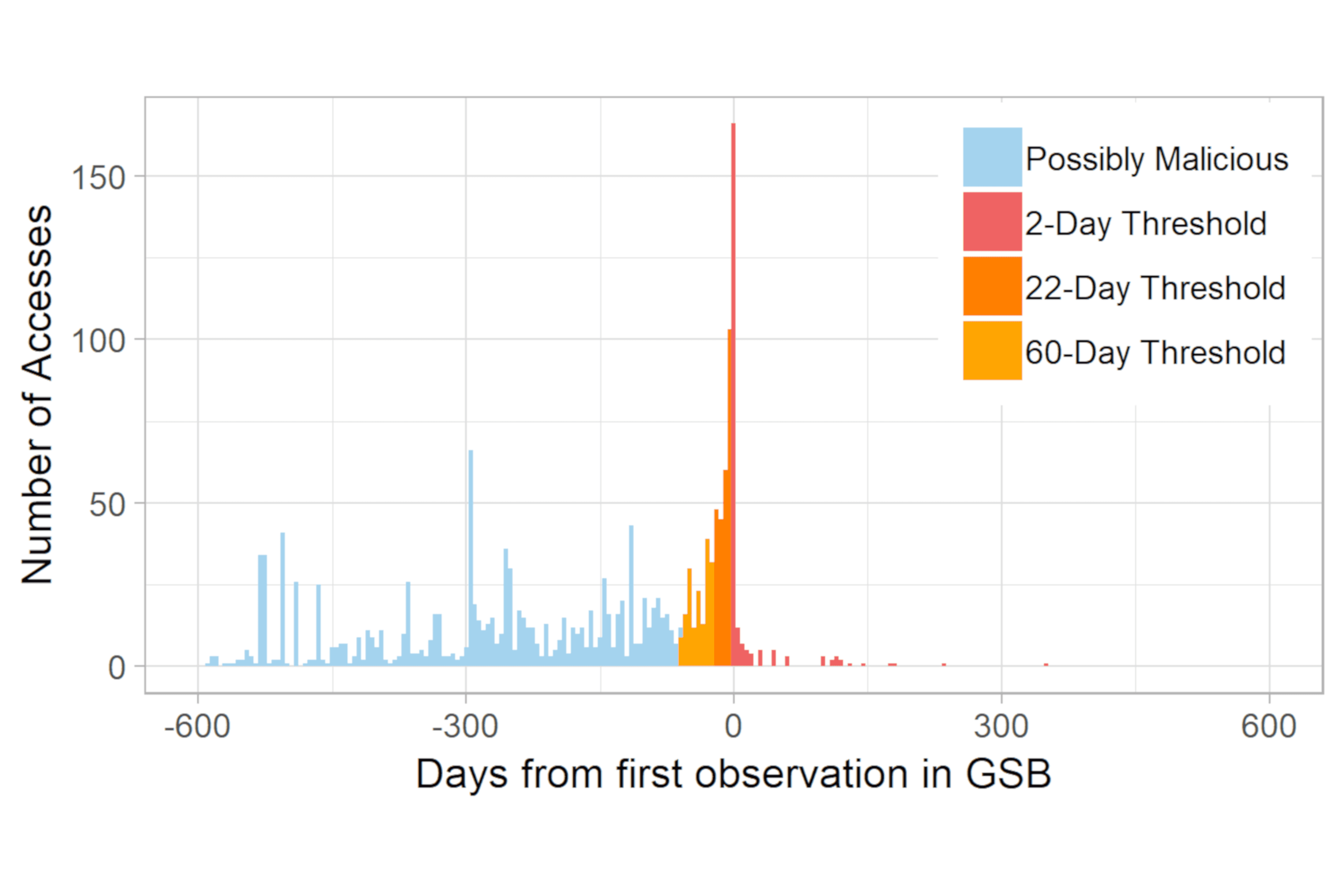

To keep users safe online, current protections frequently employ blocklists of known malware and phishing websites. However, such defenses suffer from an inherent gap between the creation of malicious content and its detection, leaving a window in which users are vulnerable. To address this limitation, our work introduces a method of detecting exposure based on a user's web browsing. Using sequential temporal modelling, our work not only improves classification performance by a significant margin (between 93% and 145% F1-score improvements, and 15-18% AUC improvements) over previous models, but also maintains strong robustness across completely disparate sets of users. Furthermore, our models show strong resilience to concept drift, as their performance holds steady over multiple years of testing.

Jin-Dong Dong, Kyle Crichton, Akira Yamada, Yukiko Sawaya, Lorrie Cranor, and Nicolas Christin. "Accurate, Generalizable, and Practical Behavioral Models to Identify Impending User Exposure to Malicious Websites". ACM Transactions on the Web (TWEB 2025). View Here

In the design of qualitative interview studies, researchers are faced with the challenge of choosing between many different methods of interviewing participants. While previous work has anecdotally compared the advantages of different online interview methods, no empirical evaluation has been undertaken. To fill this gap, we conducted 154 interviews with sensitive questions across seven randomly assigned conditions, exploring differences arising from the mode (video, audio, email, instant chat, survey), anonymity level, and scheduling requirements. We find several qualitative differences across modes related to rapport, disclosure, and anonymity. However, we found little evidence to suggest that interview data was impacted by mode for outcomes related to interview experience or data equivalence. The most substantial differences were related to logistics, where we found substantially lower eligibility and completion rates, and higher time and monetary costs, for audio and video modes.

Maggie Oates, Kyle Crichton, Lorrie Cranor, Storm Budwig, Erica J. L. Weston, Brigette M. Bernagozzi, and Julie Pagaduan. "Audio, video, chat, email, or survey: How much does online interview mode matter?" PLOS One (2022). View Here